Building Concept Detectors using Noisy Clickthrough Data

Image clickthrough data are collected from the search logs of image search engines and consist of the queries users issued and the images they clicked during their image searches. This implicit feedback is very noisy, and using clickthrough data directly as annotations to train concept detectors results in poor performance.

In our work, we study methods for modeling the noise levels of training sets constructed automatically from clickthrough data, and methods for training noise-resilient concept detectors based on weighted SVM variants. Substantial performance gains and noise robustness are achieved by introducing the noise level of each image into the training procedure through appropriate sample weights. The performance gains of weighted SVM concept detectors reach up to 130% compared to concept detectors trained with a standard SVM (i.e., without weights).
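The role of per-sample weights can be illustrated with a minimal sketch. It assumes a linear model trained by plain subgradient descent on a weighted hinge loss, and a hypothetical mapping `weight = 1 - noise level`; neither is claimed to be the weighted SVM variant or the noise model used in this work, and all function names are illustrative.

```python
def noise_to_weight(noise_levels):
    # Hypothetical mapping: a cleaner image (low noise level) gets a larger weight.
    return [1.0 - n for n in noise_levels]


def train_weighted_svm(X, y, sample_weight, lam=0.1, lr=0.01, epochs=500):
    """Minimise lam/2 * ||w||^2 + sum_i c_i * max(0, 1 - y_i * (w . x_i + b))
    by batch subgradient descent. Labels y_i are +1 or -1."""
    d = len(X[0])
    w, b = [0.0] * d, 0.0
    for _ in range(epochs):
        grad_w = [lam * wj for wj in w]  # gradient of the regulariser
        grad_b = 0.0
        for xi, yi, ci in zip(X, y, sample_weight):
            score = sum(wj * xj for wj, xj in zip(w, xi)) + b
            if yi * score < 1.0:  # margin violated: hinge term is active
                for j in range(d):
                    grad_w[j] -= ci * yi * xi[j]
                grad_b -= ci * yi
        w = [wj - lr * gj for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b
    return w, b


def predict(w, b, x):
    return 1 if sum(wj * xj for wj, xj in zip(w, x)) + b >= 0 else -1
```

An image with an estimated noise level close to 1 contributes almost nothing to the gradient, so a likely mislabeled click barely moves the decision boundary, while a weight of exactly 0 removes the sample from training altogether.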

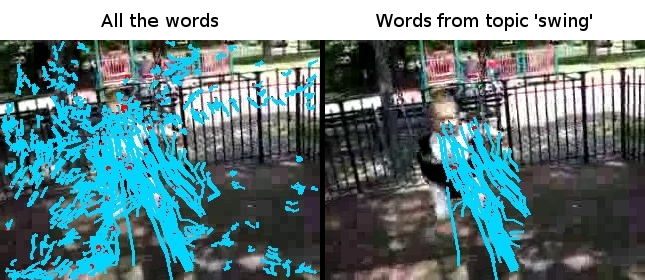

Discovering micro-events from video data using topic modeling

This research proposes a method to decompose events from large-scale video datasets into semantic micro-events by developing a new variational inference method for supervised LDA (sLDA), named fsLDA (Fast Supervised LDA). Class labels contain semantic information that cannot be utilized by unsupervised topic modeling algorithms such as Latent Dirichlet Allocation (LDA). In the case of realistic action videos, LDA models the structure of the local features, which can sometimes be irrelevant to the action. On the other hand, sLDA is intractable for large-scale datasets with regard to both time and memory. fsLDA not only overcomes the computational limitations of sLDA and achieves better classification accuracy, but also allows the influence of the supervised part on the inference of the topics to be varied.

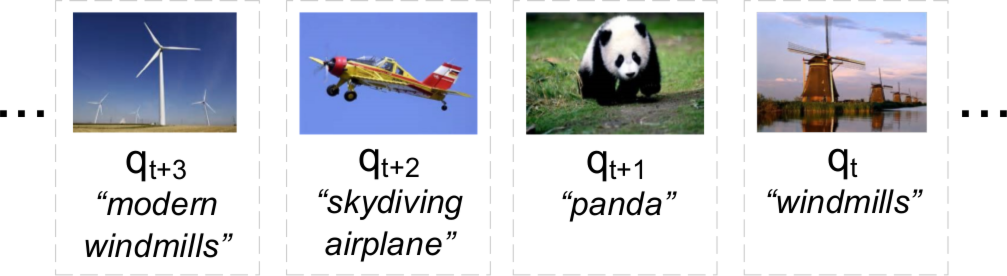

Online-trained image concept detectors from streaming clickthrough data

Our method for training noise-resilient concept detectors from clickthrough data has been extended to an online learning setting, where the concept detectors are constantly updated using streaming clickthrough data. Each click is used individually to update the model as it becomes available. Noise robustness and high performance are achieved through an implicit weighting mechanism in the feature space that depends on the number of constantly incoming clicks.
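As a rough illustration of per-click updating, the sketch below applies one stochastic hinge-loss step for every incoming click. This is an assumed stand-in, not the update rule of the method described here: the class name, the learning rate, and the convention that a click carries a +1 or -1 label for the concept are all hypothetical.

```python
class OnlineHingeDetector:
    """Linear concept detector updated one click at a time (illustrative only)."""

    def __init__(self, dim, lr=0.1, lam=0.001):
        self.w = [0.0] * dim
        self.b = 0.0
        self.lr = lr    # step size (assumed value)
        self.lam = lam  # L2 regularisation strength (assumed value)

    def score(self, x):
        return sum(wj * xj for wj, xj in zip(self.w, x)) + self.b

    def update(self, x, label):
        """Consume a single click event; label is +1 or -1."""
        violated = label * self.score(x) < 1.0
        # Shrink the weights (regularisation), then correct if the margin is violated.
        self.w = [(1.0 - self.lr * self.lam) * wj for wj in self.w]
        if violated:
            self.w = [wj + self.lr * label * xj for wj, xj in zip(self.w, x)]
            self.b += self.lr * label
```

In this sketch, an image that keeps receiving clicks triggers repeated updates, so its influence on the model grows with its click count, whereas a single stray click is outweighed by the clicks that follow it.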

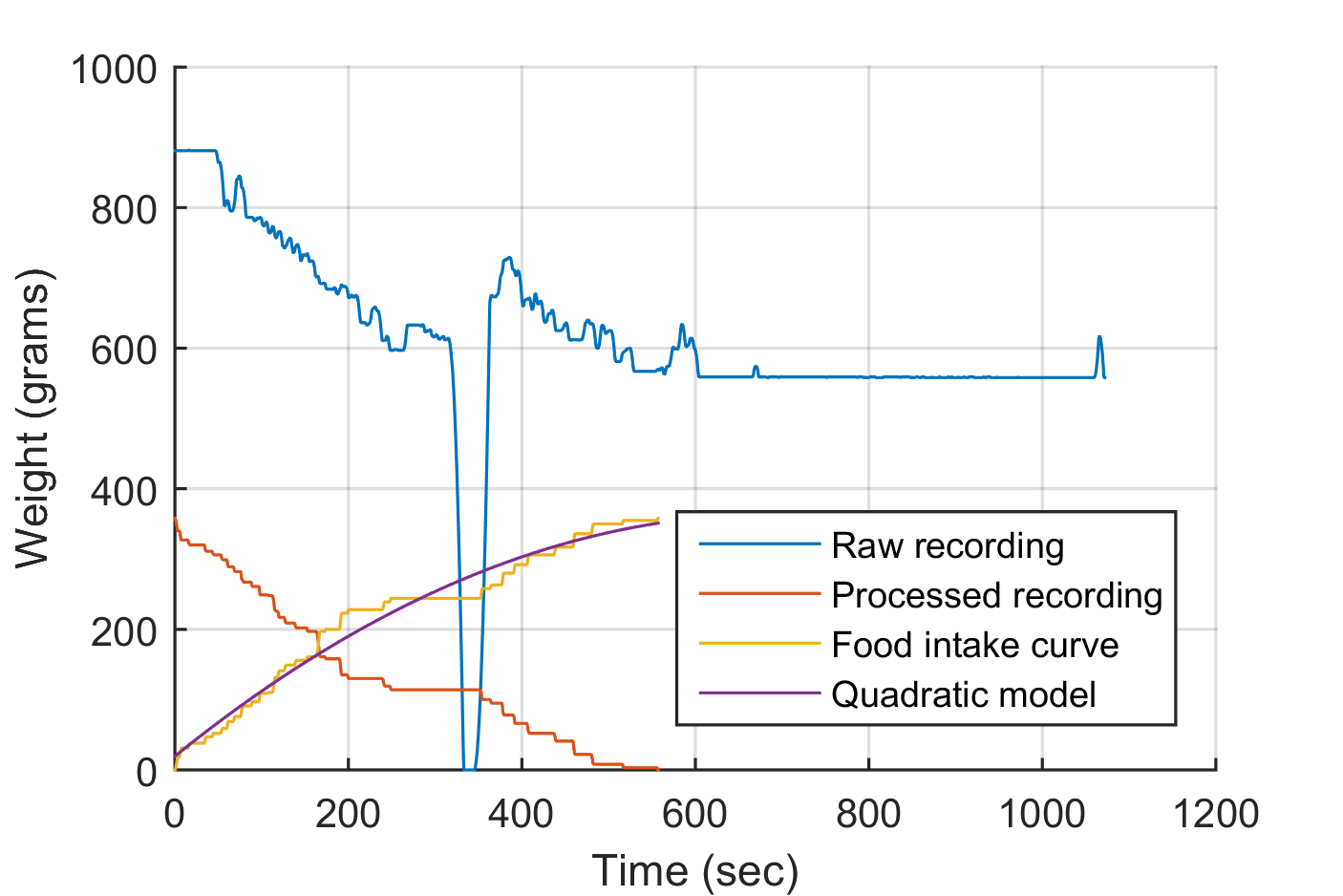

Automatic Food Intake Measurements using Mandometer

Monitoring and modification of eating behaviour through continuous meal weight measurements using the Mandometer has been successfully applied in clinical practice to treat obesity and eating disorders in Mando clinics. Analysis of in-meal recordings has identified two patterns: decelerated and linear eating. In the context of the SPLENDID project, the use of the Mandometer outside the clinic is enabled by automating the processing of the raw Mandometer recordings and extracting eating-related behavioural indicators.

To this end, we have developed a set of algorithms and evaluated them extensively on actual clinical data. The algorithms include a heuristic rule-based approach, a greedy algorithm that uses the quadratic fitting error to rank possible meal-curve interpretations, a combination of both, and finally an approach that models meal events (such as bites, food additions, and the fork or knife resting on the plate) using a parametric probabilistic context-free grammar (PPCFG).
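The idea of ranking meal-curve interpretations by quadratic fitting error can be sketched as follows. The sketch assumes that each candidate interpretation is a cumulative food intake curve sampled at the same time points, and that the error is the sum of squared residuals of a least-squares quadratic; the function names and the candidate representation are illustrative, and the greedy search itself is not reproduced.

```python
def solve_linear(a, rhs):
    """Solve a small dense linear system by Gaussian elimination with pivoting."""
    n = len(rhs)
    m = [row[:] + [r] for row, r in zip(a, rhs)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for k in range(col, n + 1):
                m[r][k] -= f * m[col][k]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        s = sum(m[i][k] * x[k] for k in range(i + 1, n))
        x[i] = (m[i][n] - s) / m[i][i]
    return x


def fit_quadratic(t, y):
    """Least-squares fit y ~ a*t^2 + b*t + c. Returns ((a, b, c), squared error)."""
    rows = [[ti * ti, ti, 1.0] for ti in t]
    # Normal equations: (A^T A) p = A^T y
    ata = [[sum(r[i] * r[j] for r in rows) for j in range(3)] for i in range(3)]
    aty = [sum(r[i] * yi for r, yi in zip(rows, y)) for i in range(3)]
    a, b, c = solve_linear(ata, aty)
    sse = sum((a * ti * ti + b * ti + c - yi) ** 2 for ti, yi in zip(t, y))
    return (a, b, c), sse


def rank_interpretations(t, candidates):
    """Order candidate curves (name -> values) from best to worst quadratic fit."""
    scored = [(fit_quadratic(t, values)[1], name) for name, values in candidates.items()]
    return [name for _, name in sorted(scored)]
```

Under this convention, a clearly negative quadratic coefficient indicates an intake rate that slows down over the meal (decelerated eating), while a coefficient near zero indicates linear eating.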

Results indicate that accurate analysis can be performed, especially with the PPCFG based algorithm. Thus, an Android implementation has been developed that uses the latest Bluetooth-enabled Mandometer to bring automatic eating behaviour analysis to the user.

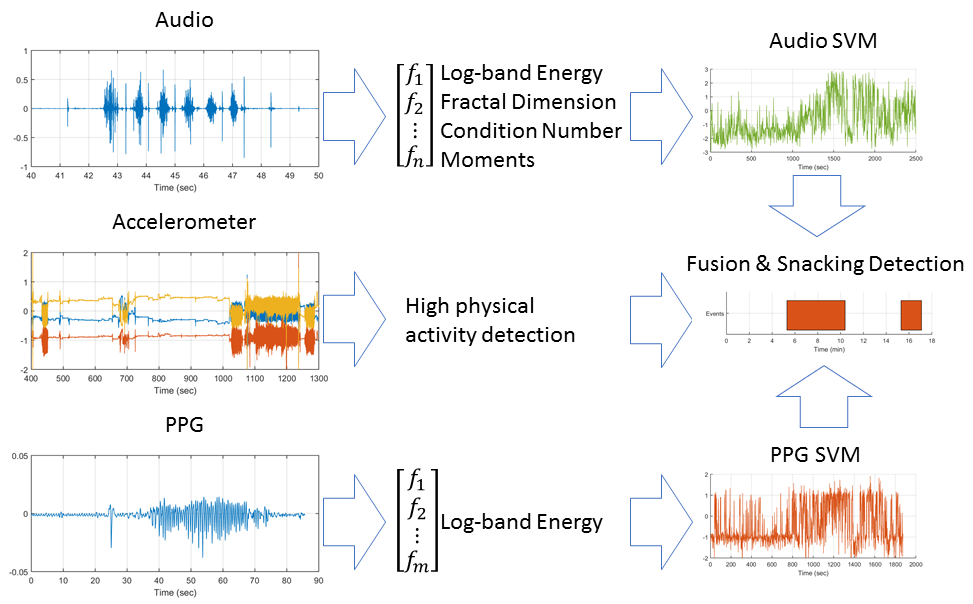

Chewing Detection using Audio and PPG Sensors

In the context of dietary management, accurate monitoring of eating habits has received increased attention. The use of novel wearable sensors, especially microphone sensors placed in the outer ear canal, to detect chewing or swallowing sounds is currently being studied extensively. In the context of the SPLENDID project, we are employing a combination of microphone and photoplethysmography (PPG) sensors, integrated into a conveniently worn housing and attached to an accelerometer-enabled data logger.

The processing pipeline first processes the audio and PPG signals independently, pre-processing them and extracting noise-resilient and amplification-independent features. The features are then fed to support vector machines; the output scores are smoothed and fused to provide the final detection signal. A post-processing algorithm aggregates the detected chewing activity, which corresponds to short chewing bouts, into eating events such as snacks or meals. In addition, the accelerometer integrated into the data logger provides physical activity level estimates that are used to further increase the method's precision.
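The smoothing, fusion, and aggregation stages can be sketched as below. The specific choices are assumptions made for illustration only: a centred moving average for smoothing, a weighted average for fusion, a fixed threshold for detecting chewing bouts, and a maximum gap (in frames) for merging bouts into eating events.

```python
def moving_average(scores, window=3):
    """Smooth per-frame classifier scores with a centred moving average."""
    half = window // 2
    out = []
    for i in range(len(scores)):
        seg = scores[max(0, i - half): i + half + 1]
        out.append(sum(seg) / len(seg))
    return out


def fuse(audio_scores, ppg_scores, audio_weight=0.5):
    """Late fusion: weighted average of the two smoothed score streams."""
    return [audio_weight * a + (1.0 - audio_weight) * p
            for a, p in zip(audio_scores, ppg_scores)]


def detect_bouts(signal, threshold=0.0):
    """Return [start, end) frame ranges where the fused score exceeds the threshold."""
    bouts, start = [], None
    for i, v in enumerate(signal):
        if v > threshold and start is None:
            start = i
        elif v <= threshold and start is not None:
            bouts.append((start, i))
            start = None
    if start is not None:
        bouts.append((start, len(signal)))
    return bouts


def merge_bouts(bouts, max_gap=2):
    """Aggregate chewing bouts separated by at most max_gap frames into eating events."""
    events = []
    for s, e in bouts:
        if events and s - events[-1][1] <= max_gap:
            events[-1] = (events[-1][0], e)
        else:
            events.append((s, e))
    return events
```

A typical call chain would be `merge_bouts(detect_bouts(fuse(moving_average(audio), moving_average(ppg))))`; the physical activity estimates from the accelerometer are not modelled in this sketch.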

In-meal food intake analysis using inertial sensors

Automatic, objective monitoring of eating behavior using inertial sensors is a problem that has received a lot of attention recently, mainly due to the mass availability of IMU-enabled wearable devices and the evidence on the importance of quantifying and monitoring eating patterns. Towards this goal, we have designed and developed a number of algorithms that make use of the accelerometer and gyroscope signals from an off-the-shelf smartwatch. Our approach is based on the segmentation of a meal session into multiple intake moments called food intake cycles. Subsequently, each food intake cycle is segmented into multiple eating-related hand movements called micromovements. Experimental results on our publicly available FIC dataset exhibit high in-meal food intake detection accuracy.